AI agents are easy to imagine and hard to trust.

The idea is appealing. Give a system a goal, connect it to tools, and let it complete work across multiple steps. In theory, it can research, draft, route, update, summarize, and act.

However, a business cannot treat autonomy as a shortcut around operational design. Agentic systems become useful only when the organization gives them clear goals, boundaries, approvals, audit trails, and human oversight.

Without that structure, autonomy turns into risk.

A useful agentic system needs more than intelligence. It needs rules that match how the business actually works.

Autonomy Has to Be Designed.

An AI agent should not simply be told to "handle the task."

That instruction may work for a demo, but it does not give a business enough control. Real operations involve customer expectations, internal standards, approval chains, financial exposure, data sensitivity, and exceptions.

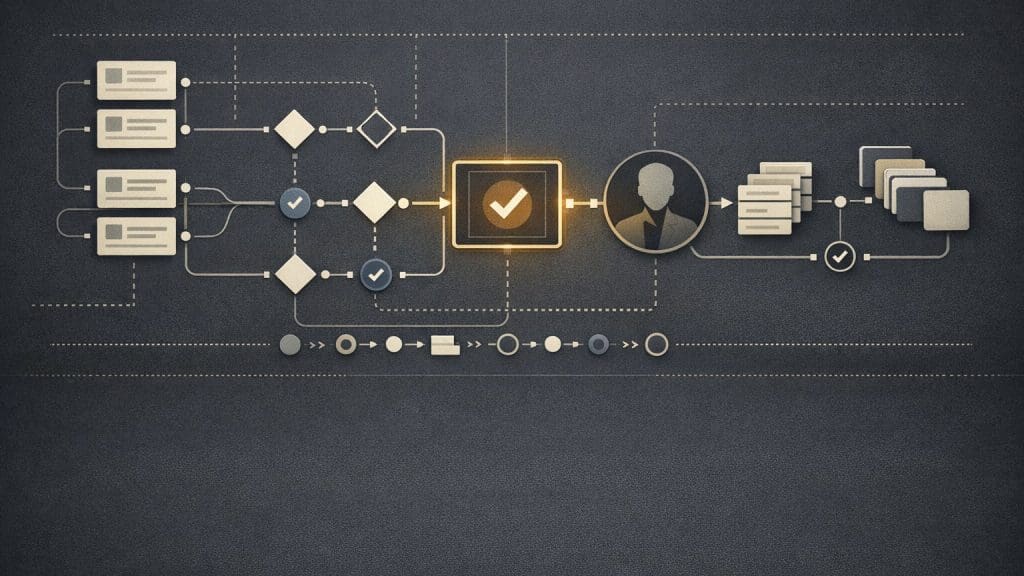

Before a company can trust an agentic system, that system needs to know what goal it is working toward, which tools it can use, which data it can access, which actions require approval, and what it should never do.
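To make those boundaries concrete, here is a minimal Python sketch that writes them down as data and checks each proposed action against them. The `AgentPolicy` structure, the `check_action` function, and the tool, data, and action names are hypothetical illustrations, not part of any particular agent framework.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical boundary definition for one agent; all names are illustrative."""
    goal: str
    allowed_tools: frozenset[str]
    readable_data: frozenset[str]
    approval_required: frozenset[str]
    forbidden: frozenset[str]


def check_action(policy: AgentPolicy, tool: str, action: str, data_scope: str) -> Decision:
    # Hard limits win over everything else.
    if action in policy.forbidden:
        return Decision.DENY
    # Anything outside the declared tools or data scopes is denied by default.
    if tool not in policy.allowed_tools or data_scope not in policy.readable_data:
        return Decision.DENY
    # Permitted, but a person must sign off before it runs.
    if action in policy.approval_required:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


# Example policy for a hypothetical support-drafting agent.
support_policy = AgentPolicy(
    goal="Draft replies to inbound support tickets",
    allowed_tools=frozenset({"crm", "email"}),
    readable_data=frozenset({"tickets", "accounts"}),
    approval_required=frozenset({"send_email", "update_account"}),
    forbidden=frozenset({"issue_refund", "delete_record"}),
)

check_action(support_policy, "crm", "read_account", "accounts")   # Decision.ALLOW
check_action(support_policy, "email", "send_email", "tickets")    # Decision.NEEDS_APPROVAL
check_action(support_policy, "crm", "issue_refund", "accounts")   # Decision.DENY
```

The design choice worth noting is the default: anything the policy does not explicitly allow is denied, so a new tool or action has to be added deliberately.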

It also needs a way to handle missing information, stop when the task becomes unclear, and document what happened. Otherwise, the agent may move quickly while creating more uncertainty for the team.

This is where many AI automation efforts go wrong. They focus on what the model can do, not on what the business should allow the system to do.

Trust Comes From Visibility.

People trust systems they can inspect.

If an agent drafts a response, updates a record, or recommends an action, the operator should be able to see how it got there. That does not mean every technical detail needs to appear on screen. Instead, the system should provide useful operational visibility.

A good agentic workflow should show the task it received, the context it used, the steps it completed, the decision points it encountered, the output it produced, the approval status, and any errors or exceptions.

Visibility turns AI from a black box into a managed process. It also gives managers and operators a practical way to improve the workflow over time.

The NIST AI Risk Management Framework is useful here because it treats trustworthy AI as a risk management problem, organized around four functions: Govern, Map, Measure, and Manage. For businesses, that framing matters. Trust is not a feeling. It is an operating condition.

Human Approval Is a Feature.

Human-in-the-loop review is not a weakness. In many business workflows, it is what makes automation practical.

Customer communication, brand standards, legal sensitivity, financial risk, hiring decisions, and account changes all involve judgment. These are rarely the right places for full automation on day one.

A better approach lets the AI prepare the work and asks a person to approve the action. The system can gather context, draft the next step, explain the recommendation, and route the task to the right reviewer.
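One way to picture this prepare-then-approve pattern is the minimal Python sketch below: the agent produces a proposal with its context and rationale, a routing table assigns a reviewer, and nothing executes until a person signs off. The task types, reviewer roles, and the `prepare`, `approve`, and `execute` functions are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical routing table: which reviewer role owns which kind of task.
REVIEWER_BY_TASK = {
    "customer_reply": "support_lead",
    "account_change": "account_manager",
    "invoice_adjustment": "finance",
}


@dataclass
class Proposal:
    task_type: str
    context: list[str]            # sources the agent gathered
    draft: str                    # the prepared next step
    rationale: str                # why the agent recommends it
    reviewer: str = ""
    approved_by: Optional[str] = None


def prepare(task_type: str, context: list[str], draft: str, rationale: str) -> Proposal:
    # Unknown task types go to a general queue instead of skipping review.
    reviewer = REVIEWER_BY_TASK.get(task_type, "operations_queue")
    return Proposal(task_type, context, draft, rationale, reviewer=reviewer)


def approve(proposal: Proposal, reviewer_role: str, person: str) -> None:
    # Only the role the task was routed to may approve it.
    if reviewer_role != proposal.reviewer:
        raise PermissionError(f"{reviewer_role} cannot approve a {proposal.task_type} task")
    proposal.approved_by = person


def execute(proposal: Proposal) -> str:
    # The action runs only after a named person has approved it.
    if proposal.approved_by is None:
        raise PermissionError("approval required before execution")
    return f"executed {proposal.task_type} (approved by {proposal.approved_by})"


p = prepare("account_change", ["crm:acct-1042"], "Upgrade plan to Pro",
            "Customer asked to upgrade in their latest ticket")
approve(p, "account_manager", "Dana")
execute(p)  # 'executed account_change (approved by Dana)'
```

The agent still does the gathering and drafting; the person's approval is recorded on the proposal itself, so accountability stays attached to the action.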

As a result, the business reduces the manual burden without removing accountability.

This is often the most practical early use of agentic systems. The agent does not replace the operator. It prepares better work for the operator to review.

Good Systems Handle Exceptions.

Real workflows are messy.

Information is missing. Customer requests are unclear. Documents conflict. Data gets stale. APIs fail. Someone asks for something outside the normal process.

A trustworthy agentic system should not pretend every task is clean. Instead, it should detect exceptions, explain the issue, and route the task appropriately.

For example, an intake agent might classify a request, gather account context, and draft a response. However, if the request involves a contract exception or a billing dispute, the system should stop and escalate the task instead of guessing.
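A minimal Python sketch of that intake behavior follows. The keyword matching is a deliberately simple stand-in for whatever classifier a real system would use, and the category names, `ESCALATE_CATEGORIES`, and `handle_request` are hypothetical. The point is the control flow: anything sensitive, unclassifiable, or missing context is escalated with a stated reason instead of being guessed at.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical categories that must always go to a person.
ESCALATE_CATEGORIES = {"contract_exception", "billing_dispute"}

# Hypothetical keyword rules standing in for a real classifier; checked in order.
KEYWORDS = {
    "billing_dispute": ("dispute", "overcharged", "chargeback"),
    "contract_exception": ("contract", "terms", "exception"),
    "password_reset": ("password", "locked out", "reset"),
}


@dataclass
class IntakeResult:
    category: str
    action: str   # "draft" or "escalate"
    reason: str   # explanation shown to the operator


def classify(text: str) -> str:
    lowered = text.lower()
    for category, words in KEYWORDS.items():
        if any(word in lowered for word in words):
            return category
    return "unknown"


def handle_request(text: str, account: Optional[dict]) -> IntakeResult:
    category = classify(text)
    # Sensitive categories stop here, regardless of how confident the draft might be.
    if category in ESCALATE_CATEGORIES:
        return IntakeResult(category, "escalate", f"{category} requires human review")
    # Unclear requests are escalated rather than guessed at.
    if category == "unknown":
        return IntakeResult(category, "escalate", "request could not be classified")
    # Missing context is an exception too.
    if account is None:
        return IntakeResult(category, "escalate", "no account context found")
    return IntakeResult(category, "draft", "routine request with account context")


handle_request("I think I was overcharged last month", {"id": 1042})
# IntakeResult(category='billing_dispute', action='escalate', reason='billing_dispute requires human review')
```

Because every escalation carries a reason, the operator receiving it knows why the agent stopped, not just that it did.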

Many AI projects fall short here. They optimize for the happy path and ignore the operational edge cases that happen every day.

Audit Trails Make Improvement Possible.

If an agent takes action inside a business system, the business needs a record.

Who initiated the task? What did the agent do? Which information did it use? Who approved the final action? What changed?

Audit trails are not just for compliance. They help teams debug failures, design better workflows, understand accountability, and improve the process.

A business cannot improve a process it cannot see. Therefore, the audit trail should be part of the system design from the beginning, not a reporting feature added later.
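As a sketch of building the audit trail into the action path rather than bolting it on, here is a minimal Python example assuming a simple in-memory record store. Each record answers the questions above: who initiated the task, what the agent did, which sources it used, who approved, and what changed. `AuditRecord`, `AuditLog`, and `apply_change` are hypothetical names, and a real system would write to durable, access-controlled storage rather than a Python list.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditRecord:
    task_id: str
    initiated_by: str               # who started the task
    agent_steps: list[str]          # what the agent did
    sources: list[str]              # which information it used
    approved_by: Optional[str]      # who approved the final action
    changes: dict[str, dict]        # field -> {"before": ..., "after": ...}
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AuditLog:
    """Append-only, in-memory log of JSON lines; there is no update or delete method."""

    def __init__(self) -> None:
        self._lines: list[str] = []

    def append(self, record: AuditRecord) -> None:
        self._lines.append(json.dumps(asdict(record), sort_keys=True))

    def for_task(self, task_id: str) -> list[dict]:
        return [r for r in map(json.loads, self._lines) if r["task_id"] == task_id]


def apply_change(store: dict, log: AuditLog, *, task_id: str, initiated_by: str,
                 steps: list[str], sources: list[str], approved_by: Optional[str],
                 field_name: str, new_value: object) -> None:
    # The change and its audit record are written in the same call,
    # so this code path cannot modify a record without logging it.
    before = store.get(field_name)
    store[field_name] = new_value
    log.append(AuditRecord(
        task_id=task_id,
        initiated_by=initiated_by,
        agent_steps=steps,
        sources=sources,
        approved_by=approved_by,
        changes={field_name: {"before": before, "after": new_value}},
    ))
```

Storing the before and after values alongside the approver makes it possible to reconstruct what changed and who was accountable without digging through application logs.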

This is also where practical software discipline matters. Agentic systems need the same operational rigor as any other business software: clear ownership, reliable data, understandable interfaces, and maintainable workflows.

Controlled Autonomy Is the Useful Version.

The most useful agentic systems will not be the ones that act without oversight.

They will be the ones that combine autonomy with structure. They will understand the task, follow the rules, use the right tools, prepare the next step, and bring a human in when judgment is needed.

That is where agentic systems become operationally useful. They do not just complete tasks. They help work move through the business with more clarity, less manual coordination, and better control.

Eckman Design helps businesses design agentic systems with clear workflows, approvals, visibility, and practical controls.